SolidityBench by IQ has launched as the primary leaderboard to guage LLMs in Solidity code era. Accessible on Hugging Face, it introduces two modern benchmarks, NaïveJudge and HumanEval for Solidity, designed to evaluate and rank the proficiency of AI fashions in producing sensible contract code.

Developed by IQ’s BrainDAO as a part of its forthcoming IQ Code suite, SolidityBench serves to refine their very own EVMind LLMs and examine them in opposition to generalist and community-created fashions. IQ Code goals to supply AI fashions tailor-made for producing and auditing sensible contract code, addressing the rising want for safe and environment friendly blockchain purposes.

As IQ instructed CryptoSlate, NaïveJudge presents a novel method by tasking LLMs with implementing sensible contracts based mostly on detailed specs derived from audited OpenZeppelin contracts. These contracts present a gold normal for correctness and effectivity. The generated code is evaluated in opposition to a reference implementation utilizing standards similar to purposeful completeness, adherence to Solidity greatest practices and safety requirements, and optimization effectivity.

The analysis course of leverages superior LLMs, together with totally different variations of OpenAI’s GPT-4 and Claude 3.5 Sonnet as neutral code reviewers. They assess the code based mostly on rigorous standards, together with implementing all key functionalities, dealing with edge instances, error administration, correct syntax utilization, and total code construction and maintainability.

Optimization concerns similar to fuel effectivity and storage administration are additionally evaluated. Scores vary from 0 to 100, offering a complete evaluation throughout performance, safety, and effectivity, mirroring the complexities {of professional} sensible contract growth.

Which AI fashions are greatest for solidity sensible contract growth?

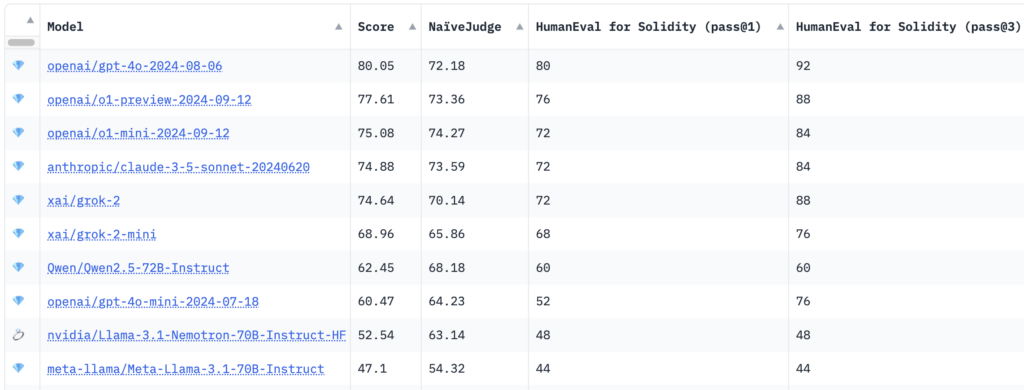

Benchmarking outcomes confirmed that OpenAI’s GPT-4o mannequin achieved the very best total rating of 80.05, with a NaïveJudge rating of 72.18 and HumanEval for Solidity move charges of 80% at move@1 and 92% at move@3.

Curiously, newer reasoning fashions like OpenAI’s o1-preview and o1-mini had been crushed to the highest spot, scoring 77.61 and 75.08, respectively. Fashions from Anthropic and XAI, together with Claude 3.5 Sonnet and grok-2, demonstrated aggressive efficiency with total scores hovering round 74. Nvidia’s Llama-3.1-Nemotron-70B scored lowest within the prime 10 at 52.54.

Per IQ, HumanEval for Solidity adapts OpenAI’s authentic HumanEval benchmark from Python to Solidity, encompassing 25 duties of various issue. Every process consists of corresponding assessments appropriate with Hardhat, a well-liked Ethereum growth atmosphere, facilitating correct compilation and testing of generated code. The analysis metrics, move@1 and move@3, measure the mannequin’s success on preliminary makes an attempt and over a number of tries, providing insights into each precision and problem-solving capabilities.

Objectives of using AI fashions in sensible contract growth

By introducing these benchmarks, SolidityBench seeks to advance AI-assisted sensible contract growth. It encourages the creation of extra subtle and dependable AI fashions whereas offering builders and researchers with useful insights into AI’s present capabilities and limitations in Solidity growth.

The benchmarking toolkit goals to advance IQ Code’s EVMind LLMs and in addition units new requirements for AI-assisted sensible contract growth throughout the blockchain ecosystem. The initiative hopes to deal with a crucial want within the trade, the place the demand for safe and environment friendly sensible contracts continues to develop.

Builders, researchers, and AI fans are invited to discover and contribute to SolidityBench, which goals to drive the continual refinement of AI fashions, promote greatest practices, and advance decentralized purposes.

Go to the SolidityBench leaderboard on Hugging Face to be taught extra and start benchmarking Solidity era fashions.